Physical Setup

I'm going to use a Raspberry Pi along with an ADC (Analogue to Digital Converter) I purchased to collect temperature data. I've connected directly to the RPi GPIO port using a T-Cobbler Breakout Kit.

Materials List:

- ADC - Microchip Technology MCP3008-I/P

- Temperature Sensor - Texas Instruments LM35DZ/LFT1

- Breadboard - Bud Industries BB-32621

- 24AWG Hookup Wire

- Raspberry Pi Model B

- T-Cobbler Breakout Kit

Image: Ye olde soldering iron

Test Setup

I mounted the MCP3008 on the breadboard and wired up a rough test setup using a potentiometer as the analogue voltage input for the ADC. For the wiring, I first referred to an online tutorial that didn't use the Serial Peripheral Interface (SPI), but I eventually found this recipe and decided to learn more about SPI with "Analogue sensors on the Raspberry Pi using an MCP3008". The connections are:

• MCP3008 VDD 3.3V

• MCP3008 VREF 3.3V

• MCP3008 AGND GROUND

• MCP3008 CLK GPIO11 (P1-23)

• MCP3008 DOUT GPIO9 (P1-21)

• MCP3008 DIN GPIO10 (P1-19)

• MCP3008 CS GPIO8 (P1-24)

• MCP3008 DGND GROUND

Image: MCP3008 DIP Pin Out Diagram
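As a rough illustration of how the wiring above gets used, here is a minimal sketch of a single-ended MCP3008 read over SPI. It assumes the py-spidev library and SPI bus 0 with chip select CE0 (GPIO8), matching the pin list; the function names and the 1 MHz clock are hypothetical choices for illustration, not taken from the original code.

```python
def build_request(channel):
    """Return the 3 bytes that ask the MCP3008 for a single-ended reading."""
    if not 0 <= channel <= 7:
        raise ValueError("MCP3008 channels are numbered 0-7")
    # Byte 0: start bit. Byte 1: single-ended flag (8) plus channel in the
    # high nibble. Byte 2: padding to clock out the rest of the result.
    return [1, (8 + channel) << 4, 0]


def decode_response(reply):
    """Extract the 10-bit result (0-1023) from the 3 bytes sent back."""
    # The top 2 bits arrive in the low bits of byte 1, the rest in byte 2.
    return ((reply[1] & 3) << 8) | reply[2]


def read_channel(spi, channel):
    """Read one channel using any object with an xfer2() method."""
    return decode_response(spi.xfer2(build_request(channel)))


def demo():
    """Hardware demo: only works on a Pi with SPI enabled and spidev installed."""
    import spidev  # assumed library (py-spidev), imported lazily

    spi = spidev.SpiDev()
    spi.open(0, 0)              # bus 0, device 0 (CE0 = GPIO8)
    spi.max_speed_hz = 1000000  # assumed clock rate; adjust as needed
    try:
        print("raw reading:", read_channel(spi, 0))
    finally:
        spi.close()
```

Calling demo() on the Pi prints the raw value for channel 0; with the potentiometer wired to that channel, turning it should sweep the reading between 0 and 1023.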

And here is the finished product wired entirely in purple. Pin 1 (the white stripe) on the ribbon cable corresponds to pin 1 of the P1 header on the RPi mainboard. Now to run some smoke tests.

I started with Matt's code from the blog mentioned above and then made a few minor changes to meet my requirements. I used the Pi WebIDE to write and debug my code; it works very well in tandem with my existing Bitbucket account once a simple OAuth consumer is added to my settings.

Here is a sample of the code I tested my setup with:

I had originally planned on creating a connector class to read from the generated (JSON) text file, and this was a useful first step, but I have since decided to send the data directly to a database on the DAQ. I think this will give me a little more flexibility as I further develop the system.

Update

After some testing with the previous setup and fiddling with the code a bit, I decided to make a few changes and finish up my circuit. So, as I mentioned before, I added a database to record my sensor data, set up a temperature sensor using the TMP35/LM35, cleaned up my wiring a bit and made some additions to my code.

Changes in this update:

- Cleaned up my wiring

- Added the temperature sensor circuit

- Updated my Python script to write directly to SQLite

- Changed the conversion factors on my input voltage and temperature calculations

- Added a crontab entry to run my script every hour

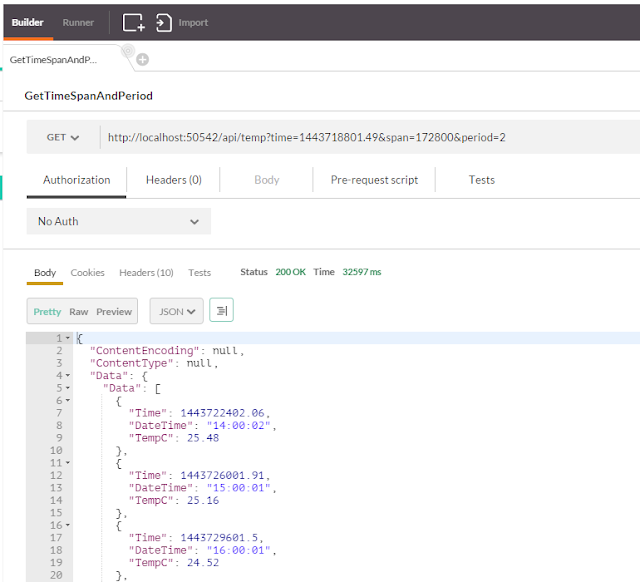

The main additions to the code for writing to a SQLite table are fairly simple. I made my SQL script in two parts just to make it a little easier to look at; the first string gives the table and variable/column names, followed by the values to insert.

# Import the library
import sqlite3

# Create output SQL strings: first the table and column names, then the values.
# (c, conn, table_name and the other variables are defined earlier in the script.)
sql_insert = "INSERT INTO {tn} ({tc}, {dc}, {lc}, {vc}, {tmp}) ".format(
    tn=table_name, tc=time_col, dc=date_col, lc=level_col, vc=volt_col, tmp=tempc_col)
sql_values = "VALUES ({tc}, '{dc}', {lc}, {vc}, {tmp})".format(
    tc=timeStamp, dc=strTime, lc=level, vc=volts, tmp=temp)
sql_string = sql_insert + sql_values

# Execute the statement, commit changes and disconnect
c.execute(sql_string)
conn.commit()
conn.close()
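Building the VALUES string with format() works for numeric readings, but sqlite3 can also bind the values itself through ? placeholders, which avoids quoting problems. Here is a minimal, self-contained sketch of that variant; the table name, column names and sample values are hypothetical stand-ins, and it uses an in-memory database so it can run anywhere.

```python
import sqlite3
import time

# Hypothetical schema for illustration; the real table and column names
# live in the full script.
TABLE = "readings"
COLUMNS = ("timestamp", "date", "level", "volts", "temp_c")


def insert_reading(conn, row):
    """Insert one (timestamp, date, level, volts, temp_c) tuple."""
    # Identifiers cannot be bound as parameters, so they are formatted in;
    # the values themselves are bound through ? placeholders.
    sql = "INSERT INTO {tn} ({cols}) VALUES ({marks})".format(
        tn=TABLE,
        cols=", ".join(COLUMNS),
        marks=", ".join("?" for _ in COLUMNS),
    )
    conn.execute(sql, row)
    conn.commit()


conn = sqlite3.connect(":memory:")  # swap in a file path on the Pi
conn.execute(
    "CREATE TABLE readings "
    "(timestamp REAL, date TEXT, level INTEGER, volts REAL, temp_c REAL)"
)

now = time.time()
insert_reading(conn, (now, time.strftime("%Y-%m-%d %H:%M:%S"), 72, 0.232, 23.2))
rows = conn.execute("SELECT level, volts, temp_c FROM readings").fetchall()
print(rows)
```

Because the values are bound rather than pasted into the SQL text, a string containing quotes is stored as plain data instead of breaking the statement.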

Here is the entire code (hosted in Gist):

I also needed to change the conversion factors from the original sample code, as they had been written for the TMP36. The TMP35 has a different response range, though it uses the same linear scale factor.

With a vRef of 3.3V, the raw reading converts to a voltage as follows; the sensor's output covers a range from 0V to 1.25V:

volts = (data * vRef) / float(1023)

That translates to a temperature range from 0°C to 125°C:

temp = ((data * vRef)/float(1023))*100

The TMP35 is rated most accurate between 10°C and 125°C
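To make the difference between the sensors concrete, here is a small sketch of the conversion with the zero-degree offset pulled out as a parameter. The function names are hypothetical; the 10 mV/°C scale and the 0.5V offset for the TMP36 are datasheet figures as I understand them, so treat them as assumptions to check against your part.

```python
V_REF = 3.3        # ADC reference voltage in volts (VREF is wired to 3.3V)
ADC_MAX = 1023.0   # largest 10-bit reading, matching the divisor used above


def counts_to_volts(data, v_ref=V_REF):
    """Convert a raw 10-bit ADC reading to volts."""
    return (data * v_ref) / ADC_MAX


def volts_to_celsius(volts, offset_v=0.0, volts_per_degree=0.01):
    """Convert sensor output to degrees C.

    offset_v is the output at 0 degrees C: assumed 0.0 for the TMP35/LM35
    and 0.5 for the TMP36.
    """
    return (volts - offset_v) / volts_per_degree


reading = 78  # example raw value, not a measured one
volts = counts_to_volts(reading)
print("TMP35/LM35: %.1f C" % volts_to_celsius(volts))
print("TMP36:      %.1f C" % volts_to_celsius(volts, offset_v=0.5))
```

With the offset at zero, dividing by 0.01 is the same as the multiply-by-100 step in my script.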

As my final step, I added a cron job to run my code once every hour, on the hour; this should give me enough data to work with in creating my API and give me some ideas for the way I want to query and present the data.

I edited the crontab on the Raspberry Pi from the command line using:

crontab -e

# my entry

0 * * * * python /home/pi/projects/TemperatureSampler.py

There is a short primer on crontab on the RPi website:

Cron And Crontab

Anyway, until I add more sensors (or maybe an IR camera) that's it for the data acquisition side of my project. What I'm working on now is finishing up the skeleton of the Web API and starting to fill it out with a few features... and eventually documenting it for another post.

It's still in progress but you can find the code in my GitHub repository.